How AI Is Changing Healthcare Education

The tools physicians use to study for boards haven't meaningfully changed in twenty years. I know because I still work with them every day. As an assistant professor of ophthalmology and a vitreoretinal surgeon at Weill Cornell Medicine, I spend five days a week seeing patients in clinic, operating, and teaching residents and fellows.

I spent four years in ophthalmology residency and two more in a vitreoretinal surgery fellowship. Every year of residency brought the OKAP. After residency came the ABO Written Qualifying Examination and the Oral Examination, all while I carried a full clinical schedule. I did what every trainee does: worked through static question banks, highlighted textbooks I'd forget within a week, and tried to work out on my own which topics actually needed more time. The content was always solid, but the process around it was inefficient in ways that never improved.

I still see it now from the other side — training residents and fellows who are going through the exact same cycle with the exact same tools. The hardest part of board prep was never finding material. It was knowing what you specifically didn't know — and having something that could actually adapt to that in real time. No question bank did. No review course did. You just studied everything and hoped the gaps closed.

That's the problem we built Subspecialty to solve. Not better content — a better intelligence layer on top of the content. And the evidence is starting to show that this approach works.

What static tools get wrong

If you've studied for boards, you know the experience. You work through a question bank linearly. You're strong in retinal vascular disease but weak in uveitis — and the platform doesn't adjust. You answer 300 questions and walk away with a percentage score and maybe a subject breakdown, but no clear sense of what to do next. The learning is passive: the platform presents, you respond, and nothing changes.

This is the fundamental limitation of traditional medical education resources. They can't observe you, identify your specific weaknesses, adjust in real time, or tell you why you're getting things wrong at a conceptual level. They're static. You're not.

What AI actually adds

The value of AI in medical education isn't the content — it's the feedback loop. AI creates a layer that sits between the learner and the material, doing things that static tools simply can't:

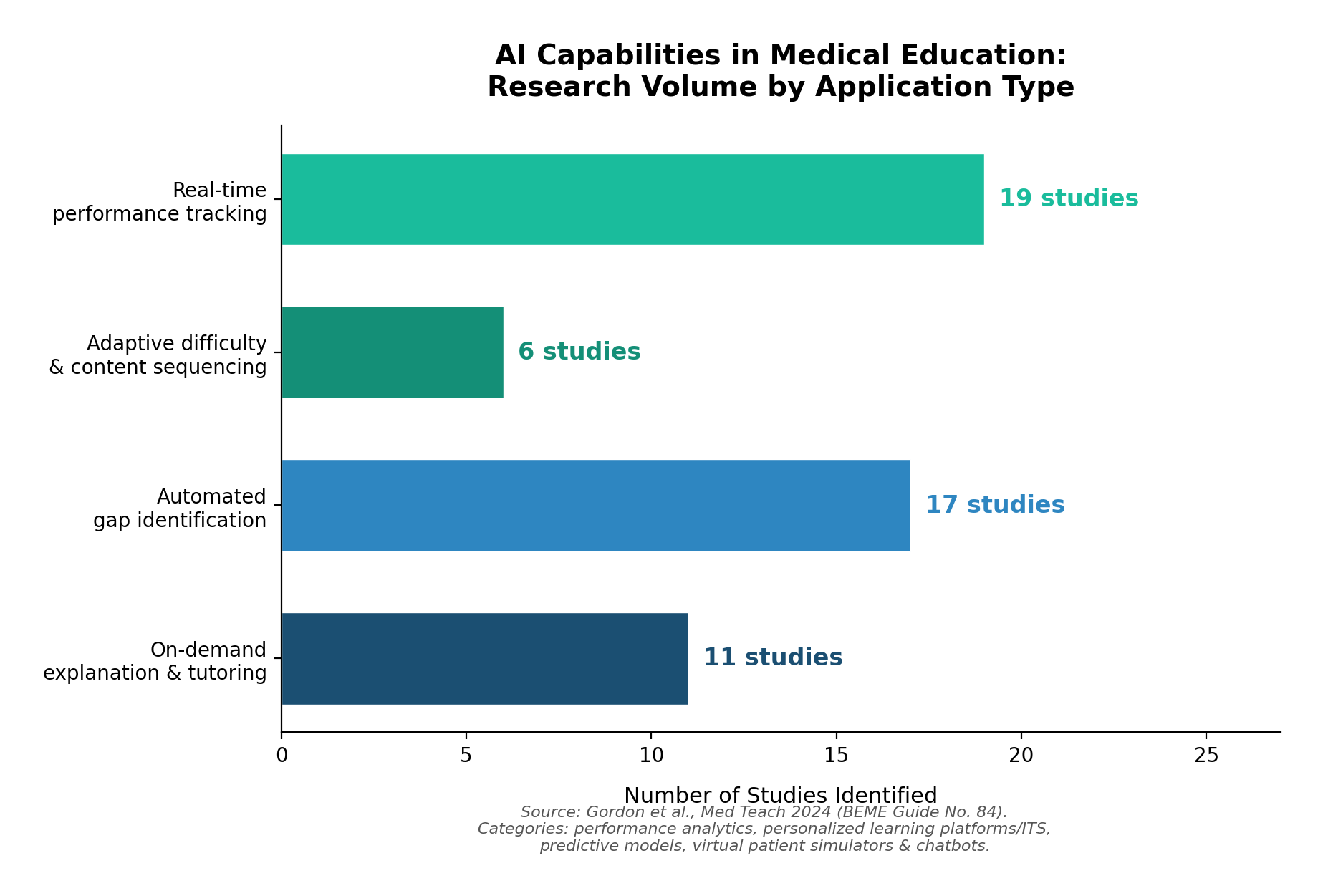

Real-time performance analysis. AI tracks your responses across topics, subtopics, and question types. It records not just whether you got something right, but how you got it wrong. Did you confuse a mechanism of action? Misread the clinical vignette? Pick the right diagnosis but the wrong next step? This kind of granular analysis turns every question into a data point that informs what you should see next. The most comprehensive scoping review of AI in medical education to date is BEME Guide No. 84, which placed no restrictions on publication date or language. In that review, performance analytics was the single most common AI application identified, appearing in 19 of the included studies.1
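To make "how you got it wrong" concrete, here is a minimal Python sketch of per-topic analytics. It assumes each missed response has already been tagged with a hypothetical `error_type` label, for example `"mechanism"`, `"vignette"`, or `"next_step"`. How that tagging happens is out of scope here. The `Response` and `summarize` names are illustrative only and do not describe any particular platform's implementation.

```python
# Illustrative sketch; Response, summarize, and the error_type labels are hypothetical.
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class Response:
    topic: str                        # e.g. "uveitis"
    correct: bool
    error_type: Optional[str] = None  # hypothetical label for *how* a miss happened


def summarize(responses: list[Response]) -> dict[str, dict]:
    """Return accuracy and a tally of error types for each topic."""
    attempts: Counter = Counter()
    correct: Counter = Counter()
    errors: defaultdict = defaultdict(Counter)
    for r in responses:
        attempts[r.topic] += 1
        if r.correct:
            correct[r.topic] += 1
        elif r.error_type is not None:
            errors[r.topic][r.error_type] += 1
    return {
        topic: {
            "accuracy": correct[topic] / attempts[topic],
            "errors": dict(errors[topic]),
        }
        for topic in attempts
    }


if __name__ == "__main__":
    history = [
        Response("retinal vascular disease", True),
        Response("uveitis", False, "next_step"),
        Response("uveitis", False, "next_step"),
        Response("uveitis", True),
    ]
    for topic, stats in summarize(history).items():
        print(topic, stats)
```

Suppose two uveitis misses share the same `next_step` label. That pattern points to a management gap rather than a recognition gap, a distinction a bare percentage score hides.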

Adaptive difficulty and sequencing. Rather than presenting questions in a fixed order, AI systems can dynamically adjust what you see based on where your understanding is weakest. A 2025 randomized controlled trial found that medical students using an AI-driven adaptive platform showed significantly improved learning outcomes, self-directed learning ability, and engagement compared with students receiving traditional instruction.2 A separate scoping review of 69 studies on personalized adaptive learning in higher education reported consistent results. It identified real-time feedback and self-paced content delivery as the primary mechanisms of benefit.3
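One common way to implement adaptive difficulty pairs an Elo-style ability estimate with a Rasch (one-parameter logistic) model of the chance of a correct answer. This is a swapped-in illustration, not necessarily the method used in the cited trial. The sketch assumes each question has a pre-calibrated `difficulty` on a logit scale and keeps one ability estimate per topic. The step size `k`, the `Item` fields, and the selection rule in `next_item` are all hypothetical choices.

```python
# Illustrative sketch; Item, next_item, and the step size k are hypothetical.
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class Item:
    item_id: str
    topic: str
    difficulty: float  # hypothetical pre-calibrated difficulty (logit scale)


def p_correct(ability: float, difficulty: float) -> float:
    """Rasch (1PL) probability that a learner at `ability` answers correctly."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


def update_ability(ability: float, difficulty: float, correct: bool, k: float = 0.4) -> float:
    """Elo-style update: nudge the estimate toward the observed outcome."""
    outcome = 1.0 if correct else 0.0
    return ability + k * (outcome - p_correct(ability, difficulty))


def next_item(abilities: dict[str, float], pool: list[Item], seen: set[str]) -> Optional[Item]:
    """Choose an unseen item from the weakest topic, matched to the learner's level there."""
    candidates = [it for it in pool if it.item_id not in seen]
    if not candidates:
        return None
    # Topics without an estimate default to 0.0 (an average learner on the logit scale).
    weakest = min({it.topic for it in candidates}, key=lambda t: abilities.get(t, 0.0))
    target = abilities.get(weakest, 0.0)
    in_topic = [it for it in candidates if it.topic == weakest]
    # An item whose difficulty equals the ability estimate has a 50% predicted success rate.
    return min(in_topic, key=lambda it: abs(it.difficulty - target))
```

Targeting items near the current estimate keeps each question informative. Items that are far too easy or far too hard reveal little about where the learner actually stands.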

Automated gap identification. AI can build a map of what you know and don't know across an entire specialty — then surface the specific areas where your understanding is weakest before you begin a study session. This is different from simply showing you a score breakdown after the fact. It's prospective: the system identifies gaps and routes you toward them. Predictive models for identifying knowledge gaps appeared in 17 studies in the BEME review, making it one of the most actively researched AI applications in the field.1
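A prospective gap map can be as simple as a smoothed accuracy estimate for every subtopic in the syllabus, computed before the session starts. This minimal sketch uses Laplace smoothing, (correct + 1) / (attempts + 2). A single lucky or unlucky answer therefore does not dominate, and never-attempted subtopics start at 0.5. The subtopic names, the 0.6 threshold, and the functions `mastery_map` and `prioritized_gaps` are hypothetical. Production systems would likely use richer predictive models.

```python
# Illustrative sketch; function names and the 0.6 threshold are hypothetical.
def mastery_map(syllabus: list[str], history: list[tuple[str, bool]]) -> dict[str, float]:
    """Laplace-smoothed accuracy per subtopic; unattempted subtopics sit at 0.5."""
    attempts = {s: 0 for s in syllabus}
    correct = {s: 0 for s in syllabus}
    for subtopic, was_correct in history:
        if subtopic in attempts:  # ignore answers outside the syllabus
            attempts[subtopic] += 1
            correct[subtopic] += int(was_correct)
    return {s: (correct[s] + 1) / (attempts[s] + 2) for s in syllabus}


def prioritized_gaps(syllabus: list[str], history: list[tuple[str, bool]],
                     threshold: float = 0.6) -> list[str]:
    """Subtopics below the threshold, weakest first (ties keep syllabus order)."""
    mastery = mastery_map(syllabus, history)
    gaps = [s for s in syllabus if mastery[s] < threshold]
    return sorted(gaps, key=lambda s: mastery[s])
```

This sketch deliberately flags never-attempted subtopics as gaps. It treats an untested area as unknown rather than mastered, which is what makes the map prospective instead of a post hoc score breakdown.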

On-demand explanation and tutoring. AI tutors can explain why an answer is correct, walk through a differential diagnosis, or reframe a concept you're struggling with. They are available immediately rather than only during office hours. A scoping review from the University of Auckland found that intelligent tutoring systems showed efficacy comparable to expert-led teaching in multiple contexts. They had the added advantage of being available on demand and scalable to any number of learners.4

The evidence isn't just about students

There's a natural tendency to associate AI-augmented learning with medical students studying for Step exams. But the most compelling evidence actually comes from practicing physicians.

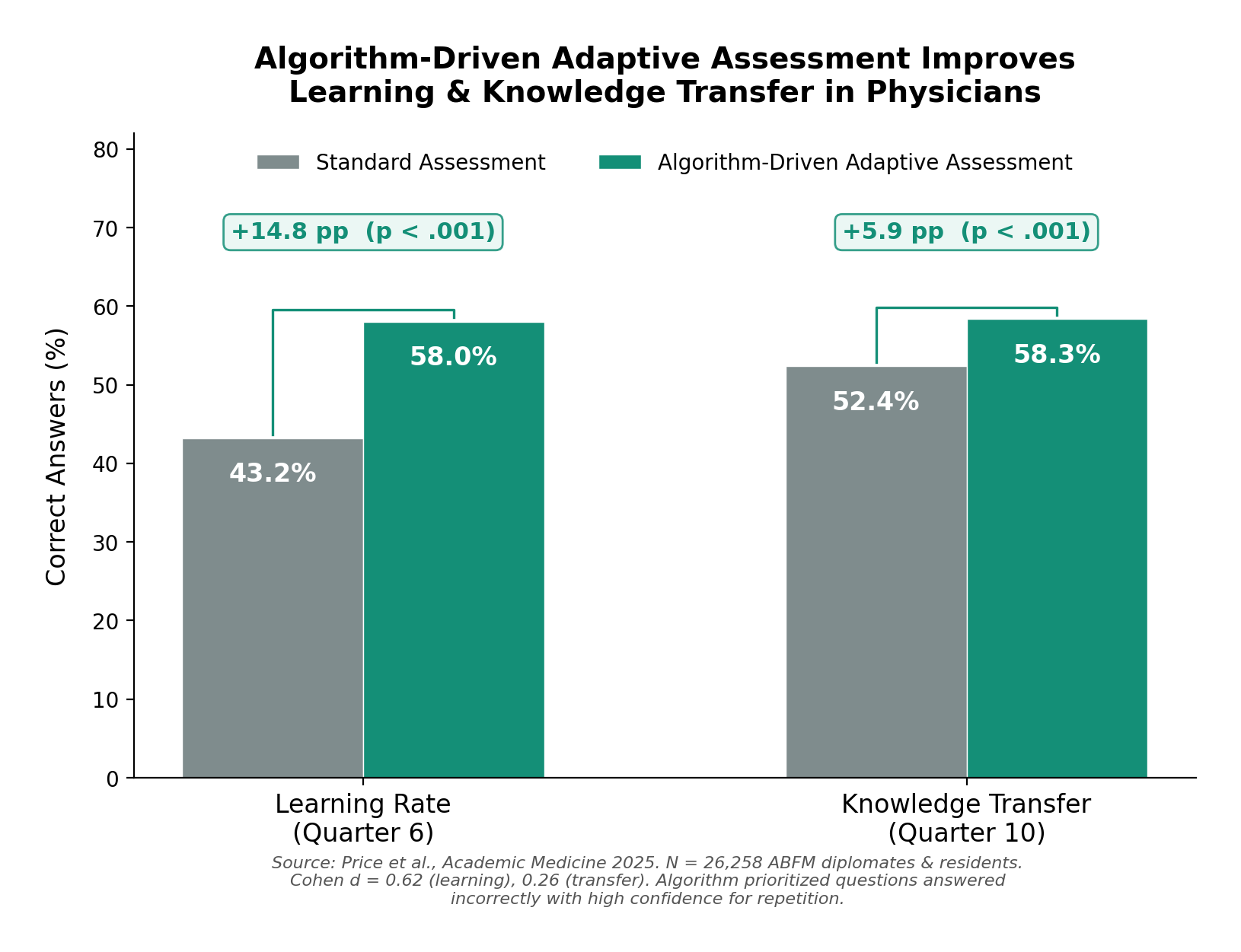

The largest study to date on adaptive, algorithm-driven assessment in medicine enrolled over 26,000 board-certified family physicians and residents through the American Board of Family Medicine. The algorithm identified questions each physician answered incorrectly with high confidence and selectively re-presented them at optimized intervals. Physicians in the adaptive group demonstrated a 58% learning rate, compared with 43% in controls. That is a medium-to-large effect size (Cohen's d = 0.62). Knowledge transfer to novel clinical scenarios also improved by nearly 6 percentage points.5
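The study's core mechanism is re-presenting confidently missed questions at spaced intervals. It can be sketched in a few lines, but the published algorithm's actual intervals and confidence scale are not reproduced here, so everything below is an assumption. Confidence is taken as a 0 to 1 self-rating. Only misses at or above a hypothetical 0.8 threshold enter the queue. Review intervals expand through a hypothetical 7, 30, and 90 days and restart after any miss.

```python
# Illustrative sketch; intervals, threshold, and all names are hypothetical.
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical expanding review intervals (days); not the study's real schedule.
INTERVALS_DAYS = [7, 30, 90]


@dataclass
class ReviewCard:
    item_id: str
    stage: int  # index into INTERVALS_DAYS
    due: date   # next date the question should be re-presented


def enqueue_if_confidently_wrong(item_id: str, correct: bool, confidence: float,
                                 answered_on: date, threshold: float = 0.8) -> Optional[ReviewCard]:
    """Queue a question for review only if it was missed with confidence >= threshold."""
    if correct or confidence < threshold:
        return None
    return ReviewCard(item_id, 0, answered_on + timedelta(days=INTERVALS_DAYS[0]))


def record_review(card: ReviewCard, correct: bool, reviewed_on: date) -> Optional[ReviewCard]:
    """Advance to a longer interval after a correct answer; restart after a miss.

    Returns None once the final interval has been passed (the item is retired).
    """
    next_stage = card.stage + 1 if correct else 0
    if next_stage >= len(INTERVALS_DAYS):
        return None
    return ReviewCard(card.item_id, next_stage,
                      reviewed_on + timedelta(days=INTERVALS_DAYS[next_stage]))


def due_for_review(cards: list[ReviewCard], today: date) -> list[ReviewCard]:
    """Cards whose review date has arrived."""
    return [c for c in cards if c.due <= today]
```

Restricting the queue to confident errors distinguishes this approach from re-asking every missed question. A confident miss plausibly signals a misconception the physician does not know they hold.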

The effect was large enough that in January 2026 the ABFM formally integrated this approach into its quarterly Continuous Knowledge Self-Assessment (CKSA). Each quarter now adds five personalized, algorithmically selected questions alongside 25 new ones.6
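The quarterly structure of 25 new questions plus five personalized ones can be expressed as a small assembly step. This sketch assumes a hypothetical review queue of previously missed items, each with a due date, and takes the most overdue ones. It is not meant to reproduce the ABFM's actual selection logic. `DueReview` and `assemble_quarter` are illustrative names.

```python
# Illustrative sketch; DueReview and assemble_quarter are hypothetical.
from dataclasses import dataclass
from datetime import date


@dataclass
class DueReview:
    item_id: str
    due: date  # hypothetical: when this previously missed item should reappear


def assemble_quarter(new_items: list[str], review_queue: list[DueReview], quarter_start: date,
                     n_new: int = 25, n_personal: int = 5) -> list[str]:
    """Return n_new fresh questions followed by up to n_personal overdue review items."""
    if len(new_items) < n_new:
        raise ValueError(f"need {n_new} new items, got {len(new_items)}")
    # Most overdue first, so long-neglected gaps are served before recent ones.
    overdue = sorted((r for r in review_queue if r.due <= quarter_start), key=lambda r: r.due)
    personal = [r.item_id for r in overdue[:n_personal]]
    return list(new_items[:n_new]) + personal
```

If fewer than five items are overdue, this version simply returns a shorter set rather than padding it.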

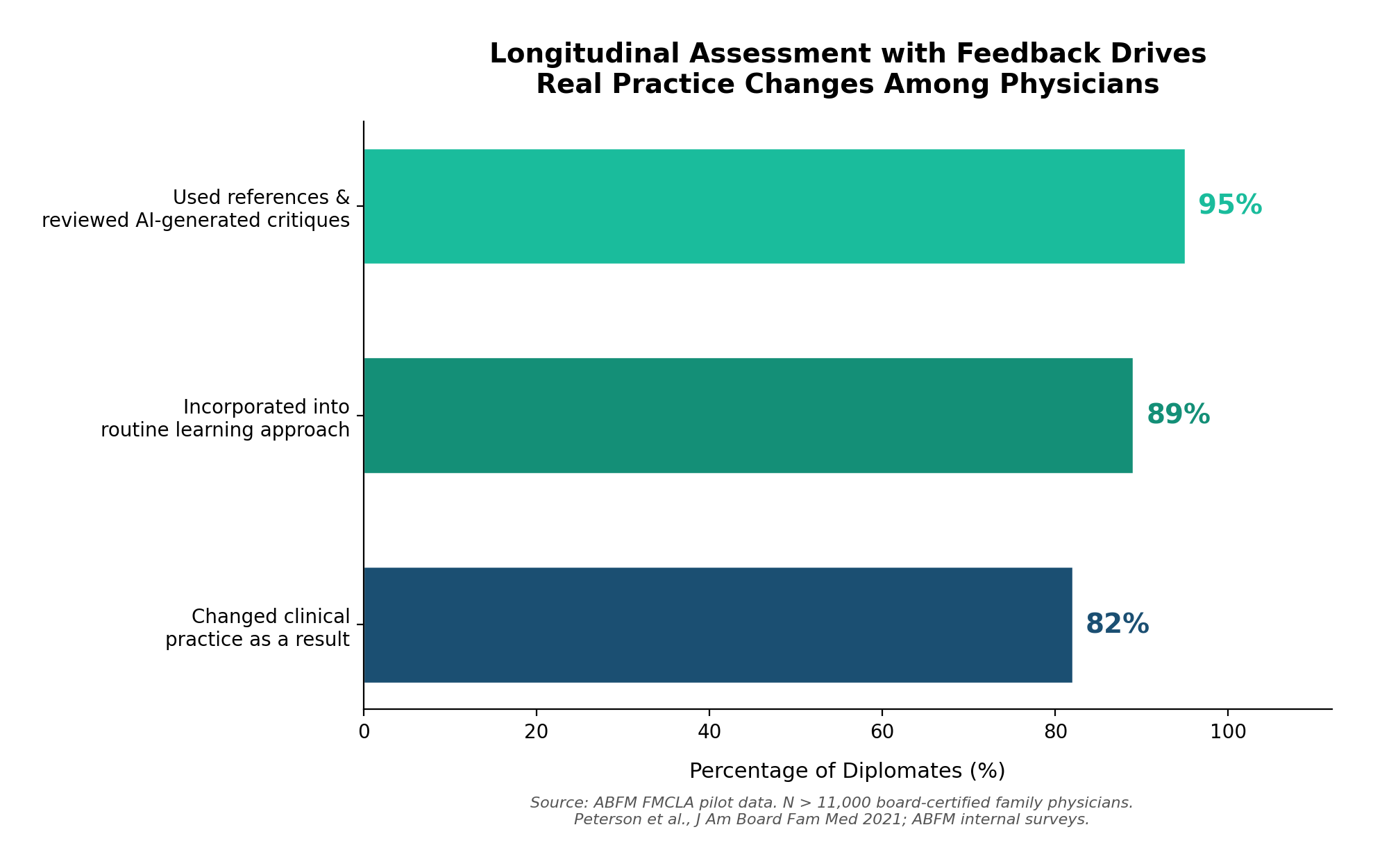

And the impact goes beyond test scores. More than 11,000 diplomates enrolled in the ABFM's longitudinal assessment pilot, which provides immediate critiques and references after every question. Among them, 82% reported changing their clinical practice as a result of participating. Ninety-five percent used the references and reviewed the critiques. Eighty-nine percent incorporated the pilot into their routine approach to staying current.7

These aren't students preparing for a one-time exam. These are practicing physicians, years or decades out from training, who report changing how they care for patients because an intelligent system identified what they didn't know and helped them close the gap.

What AI doesn't replace

It's worth being explicit about what AI should not do in this context. AI can help generate and scale content, but it doesn't determine what constitutes correct clinical practice. It doesn't replace the judgment of a board-certified physician reviewing content for accuracy, or the rigor of primary literature. On our platform, every question is reviewed by physicians before publication, and every explanation links to textbook references and PubMed citations — not AI-generated summaries standing on their own.

The BEME scoping review made this point clearly: across the full landscape of AI applications in medical education, the implementations that worked best were those that kept clinical expertise central and used AI for augmentation rather than replacement.1 The AI layer handles the logistics — tracking performance, adapting content, identifying gaps, generating explanations. The medical knowledge comes from physicians, textbooks, and the peer-reviewed evidence base.

This distinction matters. The goal isn't to hand medical education over to an algorithm. It's to give physicians a tool that learns alongside them — one that knows what they've mastered, what they haven't, and what they should study next.

Where this is going

We're still early. The BEME review noted that most AI implementations in medical education are in early adaptation phases, with few achieving deep longitudinal integration.1 Rigorous randomized controlled trials remain scarce, and most of the evidence comes from single-site studies.2 But the direction is clear.

Board certification bodies are already moving. The ABFM's adoption of algorithm-driven assessment is the most visible example, but the American Board of Medical Specialties has reported similar patterns — 94% of participating internists reported learning from the feedback, and 49% made or planned practice changes based on participation.8

For trainees, the implications are more immediate. The volume of material required for specialty boards is enormous, and study time during residency and fellowship is limited. An AI layer that can identify exactly where your understanding is weakest — and route you there — isn't a luxury. It's a meaningful efficiency gain.

We built Subspecialty around this idea: that the intelligence layer matters as much as the content layer. But regardless of which platform you use, the underlying principle is the same. Medical education tools should know what you know, adapt to what you don't, and get smarter every time you use them. The evidence says that works. The question is how fast the field adopts it.

References

1. Gordon M, Daniel M, Ajiboye A, et al. A scoping review of artificial intelligence in medical education: BEME Guide No. 84. Med Teach. 2024;46(4):446–470.
2. Chen Y. Evaluation of the impact of AI-driven personalized learning platform on medical students' learning performance. Front Med. 2025;12:1610012.
3. du Plooy E, Casteleijn D, Franzsen D. Personalized adaptive learning in higher education: a scoping review of key characteristics and impact on academic performance and engagement. Heliyon. 2024;10(21):e39630.
4. Shaw K, Henning MA, Webster CS. Artificial intelligence in medical education: a scoping review of the evidence for efficacy and future directions. Med Sci Educ. 2025;35(3):1803–1816.
5. Price DW, Winward ML, Newton WP, et al. The effect of spaced repetition on learning and knowledge transfer in a large cohort of practicing physicians. Acad Med. 2025;100(1):94–102.
6. American Board of Family Medicine. Advancing CKSA: integrating spaced repetition to strengthen knowledge retention. Published January 2, 2026. theabfm.org
7. Peterson LE, Fang B, Phillips RL, Avant R, Puffer JC. The evolution of knowledge assessment: ABFM's strategy going forward. J Am Board Fam Med. 2021;34(Suppl):S49–S54.
8. American Board of Medical Specialties. Board certified physicians say assessments support learning. Published October 2025.